Beyond the Buzz: How to Turn AI Hype Into Real Business Value

Oct 15, 2025

AI can generate working prototypes in minutes, demo apps are everywhere, and investors are pouring billions into the space. It feels like the future has arrived. People with no coding background are claiming to ship apps in hours, not weeks or months. Sometimes, it seems like the hard problems of building software have all been solved.

But for those who have forged ahead with AI and started building seriously with it, a harder question is emerging: Can AI work at scale?

Right now, many companies aren’t even thinking about ROI. They’re building workflows, automations, and processes on the assumption that ROI will naturally appear. This is a high-stakes gamble. If those systems don’t hold up under pressure, or if costs spiral beyond what budgets can absorb, organizations may find themselves locked into brittle foundations.

In other words, the danger isn’t that AI doesn’t work—it’s mistaking today’s hype for tomorrow’s durability.

The peak—or just a plateau?

The current moment feels a lot like “peak AI.” After all, how do you top a chatbot that writes code, drafts marketing campaigns, or answers legal questions? The wow factor is undeniable, and for many, it feels like we’ve reached the ceiling of what this technology can do.

But history tells a different story. Every major technology has gone through a stage where excitement outpaced reliability. Cloud computing was initially dismissed as insecure. Early mobile apps were mostly fun and games before the iPhone unlocked more serious utility. Blockchain had a frenzy of hype, followed by “crypto winters” before gaining in popularity again. Each technology had to plateau for a period of time before it could mature.

That plateau matters. It forces us to move past novelty and ask tougher questions:

- What can this technology reliably support?

- What should we depend on it for?

- Where are the hidden edges where it fails?

These are the types of questions that guide a technology past the dreaded plateau—from flashy demos to dependable, real-world impact.

From “vibe coding” to serious engineering

Much of today’s AI development lives in what we call “vibe coding,” or the practice of generating code or prototypes with AI without too much concern for quality. It feels magical—you describe what you want, the AI generates it, and suddenly you have a working demo. It’s fast, exciting, and great for proof-of-concept work.

AI-assisted “vibe coding” can be a powerful tool for non-technical founders. It’s great for spinning up a demo or pitch that looks polished on day one. But that early shine hides some real limits: these systems are often fragile and lack documentation, observability, and a consistent architecture. When no one fully understands how the AI stitched things together, debugging becomes a nightmare.

In short: AI can help you pitch on day one, but scaling to day 100 is a whole different challenge.

Scaling requires something very different: engineering discipline. You need monitoring to see how the system behaves in production, rigorous testing to catch failures before they hit customers, compliance processes to avoid regulatory pitfalls, and careful design of the user experience to ensure people understand and trust what the AI produces. Together, these elements form the scaffolding that turns a prototype into a real product.

Here’s a real-world example: We tested an AI system on the pretty routine task of upgrading a UI library for a company’s website. On paper, AI should breeze through a task like that because the rules are clearly documented, the migration steps are straightforward, and the bulk of the work is repetitive and doesn’t require deep domain creativity. In practice, it failed completely—and because no one on the team had the technical expertise to fix it, a minor update turned into a major roadblock. What looked like a neat shortcut quickly became a serious liability.

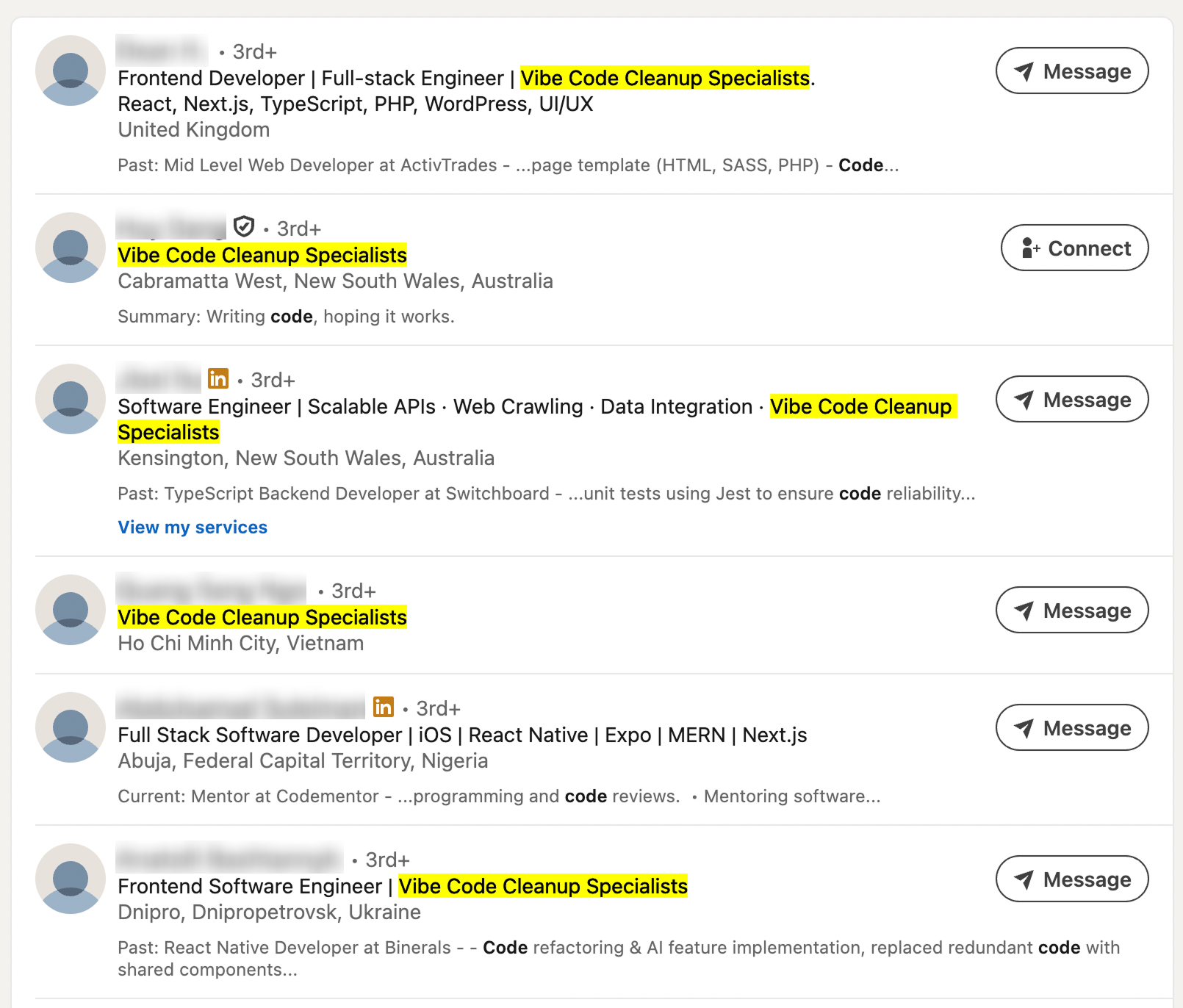

Some organizations already see this risk coming. They hedge by keeping an engineer on payroll whose sole job is to swoop in when AI-generated workflows inevitably break down. You’ll even find tongue-in-cheek LinkedIn titles like “Vibe Code Clean-Up Specialist.”

But that’s treating the symptom, not the cause. The real issue isn’t that AI prototypes collapse—it’s that they were never engineered to scale in the first place. Far from making engineers obsolete, this reality only increases their value. They’re the ones who can architect durable systems from day one, so you don’t need a rescue crew on day 100.

A pause, not an endgame

This moment isn’t a ceiling—it’s simply a checkpoint. The demos have proven what’s possible. Now comes the harder, but more important, part: making it sturdy.

AI is great at certain things: synthesizing research, generating creative drafts, making broad estimations. But engineering requires a different kind of expertise. It requires precision and rigor.

Take one founder we’ve seen: AI-powered vibe coding helped him pull together a slick prototype, which made it easier to get early attention. But it also gave him more than he could realistically handle. The polished interface borrowed from familiar patterns, but underneath the integrations never really held together. If he had kept the prototype narrower in scope, he might have avoided burning through both time and money. Instead, with AI making it easy to push further, he didn’t see where the guardrails should have been.

That’s the trap. AI can spin up screens, flows, and even snippets of code that feel production-ready. But without experienced engineers to set constraints, catch edge cases, and build sound integrations, you’re left with brittle systems that collapse under real use. The real challenge isn’t seeing how far AI can go on its own; it’s knowing when to pair it with human expertise. Teams that strike this balance turn AI into an accelerator instead of a liability.

Why now matters

Teams are already running into AI’s limits: rising costs as usage scales, brittle outputs that fail under load, and compliance requirements that regulators are only starting to enforce. The early thrill of rapid prototyping is giving way to the more sobering operational reality of sustaining those prototypes in production.

Leaders are at a fork in the road:

- Double down on flashy experiments, chasing the hype cycle while hoping the foundations hold, or

- Invest in durable infrastructure, building oversight, testing, and hybrid workflows where AI and human expertise reinforce each other.

The ROI reckoning is coming. Companies can’t afford to keep stacking workflows under the assumption that AI will “just work.” The winners will be those who invest in sturdiness and the durable value layer of AI now—not just the flashy demos.

So, is this as good as AI gets?

Not even close.

This is the moment where serious builders step up. AI alone isn’t enough. The leaders of the next decade will be the ones who combine AI’s speed with human oversight, engineering discipline, and long-term strategy.

At HappyFunCorp, we’re already guiding teams through this shift, from vibe coding to resilient, scalable systems. If you’re validating an idea, scaling a product, or embedding AI into your core workflows, we’d love to talk.

Because the future of AI won’t be built on hype. It’ll be built on durability.
